CoRL 2023

Large Vision-Language Models as Embodied Agents

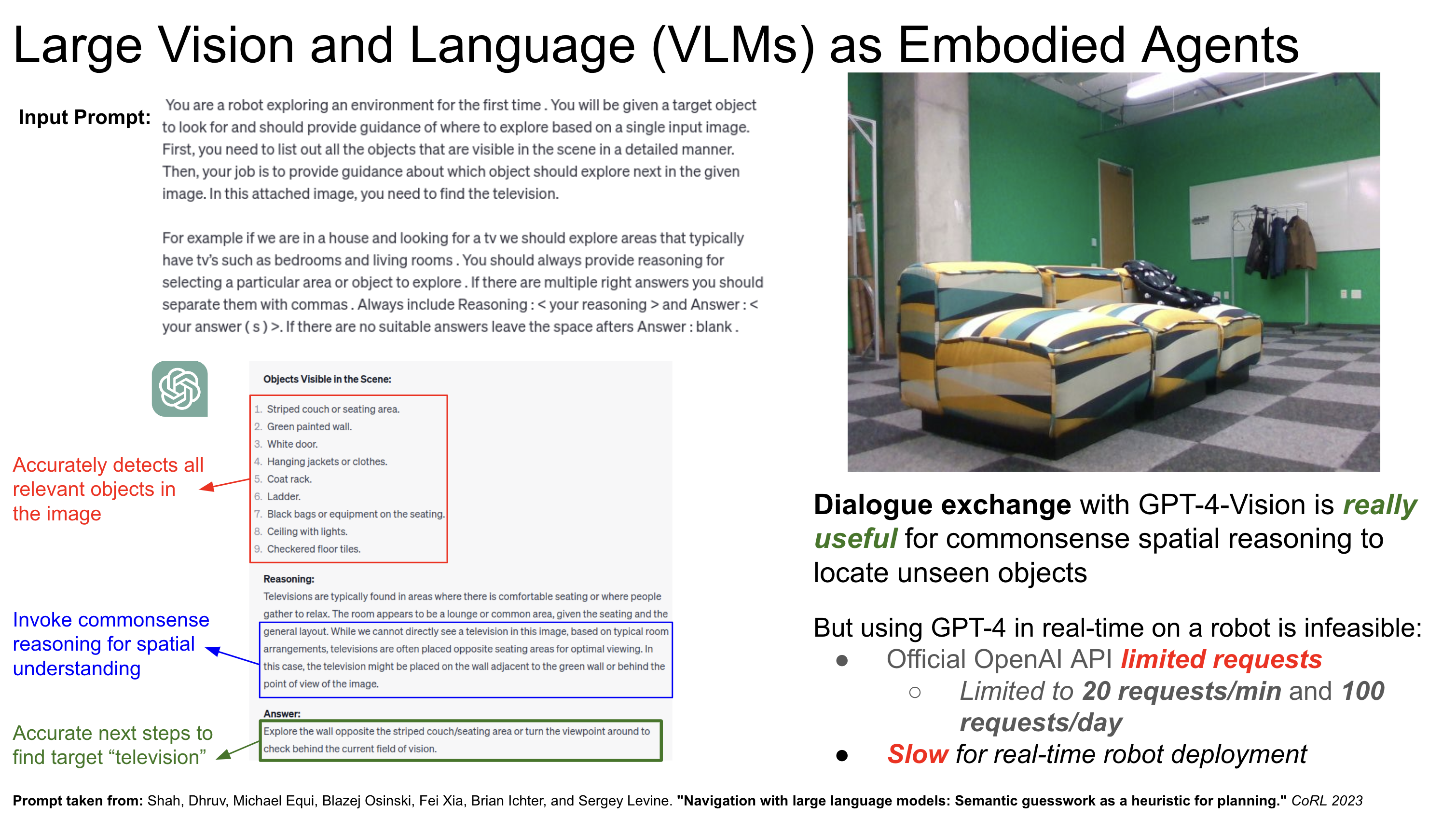

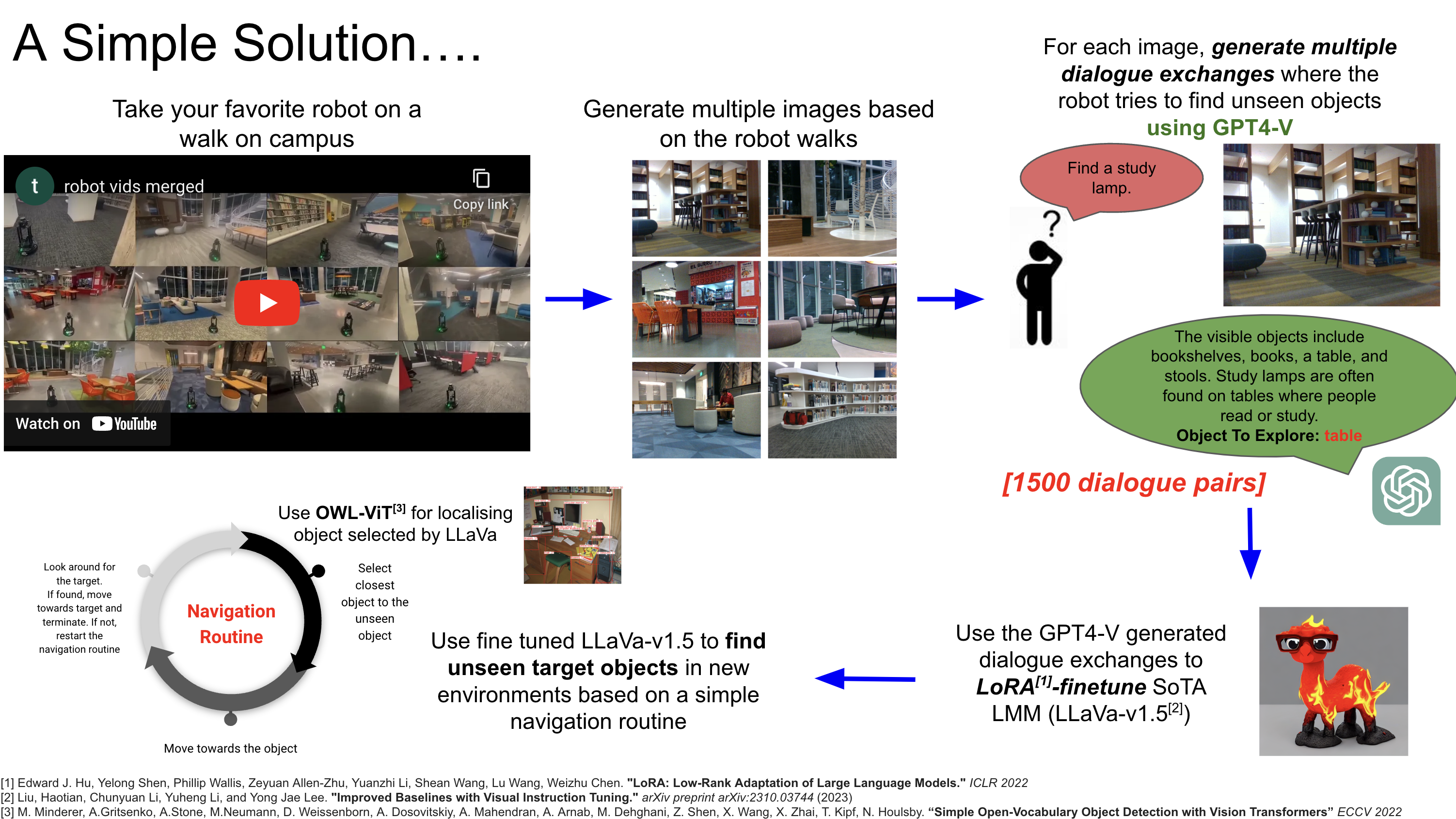

In this project, we show that a language-driven module is effective for open-vocabulary object navigation in a previously unseen real-world environment on a real LoCoBot. Specifically, we finetune a recent large VLM (LLaVA-v1.5) to efficiently select subgoals that lead to the queried open-vocabulary object. The LLaVA-v1.5 model is finetuned with LoRA on a dataset of GPT-4V-generated multi-turn dialogue exchanges about finding target objects in real-world images collected by the robot. Our method uses natural-language inputs to guide the robot to new objects and leverages open-vocabulary detectors such as OWL-ViT for reliable navigation routines.
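To make the finetuning data more concrete, the sketch below shows one way a multi-turn dialogue about a robot image could be packed into a training record. It assumes the conversation layout used by the public LLaVA finetuning scripts (`id`, `image`, and a `conversations` list of alternating `human` / `gpt` turns, with an `<image>` placeholder in the first human turn). The helper name `build_dialogue_record`, the file path, and the dialogue text are hypothetical and are not taken from the project's dataset.

```python
import json
from typing import Dict, List, Tuple


def build_dialogue_record(
    sample_id: str,
    image_path: str,
    turns: List[Tuple[str, str]],
) -> Dict:
    """Pack (question, answer) pairs into one LLaVA-style training record.

    Assumption: the record layout follows the public LLaVA finetuning
    format; the project's actual schema is not given in the text.
    """
    if not turns:
        raise ValueError("a dialogue needs at least one (question, answer) turn")

    conversations = []
    for index, (question, answer) in enumerate(turns):
        # Only the first human turn carries the image placeholder token.
        prefix = "<image>\n" if index == 0 else ""
        conversations.append({"from": "human", "value": prefix + question})
        conversations.append({"from": "gpt", "value": answer})

    return {"id": sample_id, "image": image_path, "conversations": conversations}


if __name__ == "__main__":
    # Hypothetical two-turn exchange about where to search for a target object.
    record = build_dialogue_record(
        sample_id="example-0001",
        image_path="robot_images/example-0001.jpg",
        turns=[
            ("The robot is looking for a coffee mug. What do you see?",
             "A hallway with an open door on the left leading to a kitchen area."),
            ("Which subgoal should the robot move toward next?",
             "The open door on the left, since mugs are usually found in kitchens."),
        ],
    )
    print(json.dumps([record], indent=2))
```

A list of such records, written to a single JSON file, is the usual input for LLaVA-style finetuning. Whether the project stored its GPT-4V dialogues in exactly this form is an assumption.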

Left: Existing issue in leveraging VLMs for embodied navigation. Right: Overall pipeline of our proposed solution.

Demonstration video (1x speed) of the LoRA-finetuned LLaVA-v1.5 running online on a real robot (model inference offloaded to a cluster in real time).